
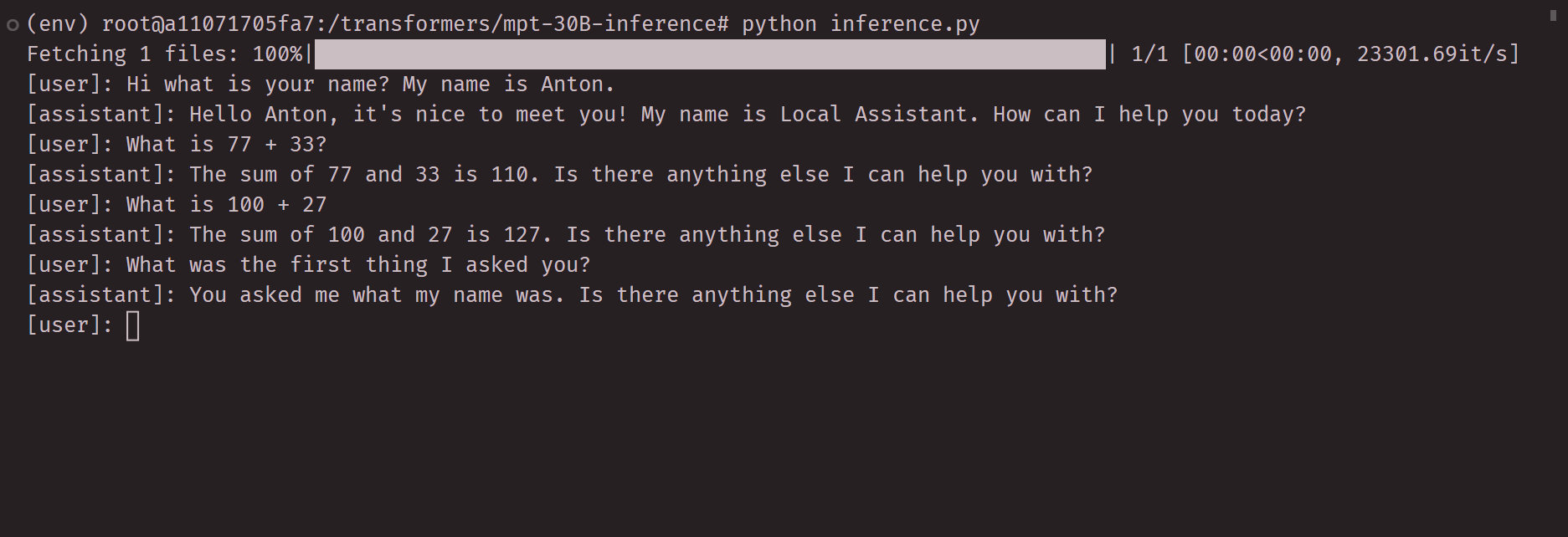

MPT 7B inference code using CPU

Run inference on the latest MPT-7B model using your CPU and just 8 GB of RAM. If you have more RAM (32 GB), check out the original repo, which uses a much larger LLM. This inference code uses a ggml-quantized model. To run it, we'll use ctransformers, a Python library with bindings to ggml.
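As a rough illustration of what loading a ggml model through ctransformers looks like, here is a minimal sketch. It is not the contents of this repo's inference.py. The model path is hypothetical, and the helper names `load_model` and `generate` are made up for this example; only `AutoModelForCausalLM.from_pretrained` and calling the returned model with a prompt come from ctransformers.

```python
from pathlib import Path


def load_model(model_path: str, model_type: str = "mpt"):
    """Load a ggml-quantized model file through ctransformers."""
    # Fail early with a clear message if the weights were not downloaded yet.
    if not Path(model_path).is_file():
        raise FileNotFoundError(f"model weights not found: {model_path}")

    # Imported here so the missing-file check above works even before
    # the dependencies are installed.
    from ctransformers import AutoModelForCausalLM

    return AutoModelForCausalLM.from_pretrained(model_path, model_type=model_type)


def generate(llm, prompt: str, max_new_tokens: int = 128) -> str:
    """Run one completion; the sampling values here are illustrative only."""
    return llm(prompt, max_new_tokens=max_new_tokens, temperature=0.7)


if __name__ == "__main__":
    # Hypothetical path: point this at wherever your weights actually live.
    llm = load_model("models/mpt-7b-ggml.bin")
    print(generate(llm, "Explain quantization in one sentence."))
```

Keeping the load step separate from generation means the weights (about 4GB) are read once and reused for every prompt.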

Turn-style conversation with history, as of the latest commit:

Video of initial demo:

Requirements

I recommend using Docker for this model; it will make everything easier for you. The minimum spec is a system with 8 GB of RAM. Python 3.10 is recommended.
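If you are not using Docker, a quick preflight check can tell you whether a machine meets these requirements. The script below is a sketch and is not part of this repo. The function names and the 7 GiB threshold are assumptions (machines sold with 8 GB usually report slightly less), and `os.sysconf` with these keys works on Linux and most Unix systems but not on Windows.

```python
import os
import sys

# Assumption: treat anything at or above 7 GiB as an "8 GB" machine, since
# the OS normally reports a little less than the advertised amount.
MIN_RAM_GIB = 7.0
RECOMMENDED_PYTHON = (3, 10)


def total_ram_gib() -> float:
    """Total physical memory in GiB (Linux and most Unix systems)."""
    page_size = os.sysconf("SC_PAGE_SIZE")
    page_count = os.sysconf("SC_PHYS_PAGES")
    return page_size * page_count / 1024**3


def check_requirements(ram_gib: float, python_version: tuple) -> list:
    """Return a list of human-readable warnings; empty means all good."""
    warnings = []
    if ram_gib < MIN_RAM_GIB:
        warnings.append(f"only {ram_gib:.1f} GiB of RAM; 8 GB is the minimum")
    if tuple(python_version[:2]) != RECOMMENDED_PYTHON:
        found = ".".join(str(part) for part in python_version[:2])
        warnings.append(f"Python {found} found; 3.10 is recommended")
    return warnings


if __name__ == "__main__":
    problems = check_requirements(total_ram_gib(), tuple(sys.version_info))
    for problem in problems:
        print("warning:", problem)
    if not problems:
        print("system looks fine")
```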

Tested working on

AMD Ryzen 3750h with 16GB RAM, running Ubuntu 22.04 LTS. Runs fine, if not the fastest.

Setup

First create a venv.

python -m venv env && source env/bin/activate

Next install dependencies.

pip install -r requirements.txt

Next download the quantized model weights (about 4GB).

python download_model.py

Ready to rock, run inference.

python inference.py

Next, modify the prompt and generation parameters in the inference script.
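To give an idea of what a prompt and generation parameters might look like, here is a sketch of a turn-style prompt builder with history. It is not taken from inference.py. It assumes a ChatML-style template (the `<|im_start|>` and `<|im_end|>` markers), which may not match the model you downloaded, and `build_prompt` and `GENERATION_PARAMS` are names invented for this example. The parameter keys are ones that a ctransformers model accepts as keyword arguments when called; the values are arbitrary starting points.

```python
# Illustrative values only; tune them for your use case.
GENERATION_PARAMS = {
    "max_new_tokens": 256,      # upper bound on the length of the reply
    "temperature": 0.7,         # lower is more deterministic
    "top_p": 0.9,               # nucleus sampling cutoff
    "repetition_penalty": 1.1,  # discourage repeated tokens
    "stop": ["<|im_end|>"],     # stop once the assistant turn is closed
}


def build_prompt(system: str, history: list, user_message: str) -> str:
    """Build a ChatML-style prompt from earlier turns plus the new message.

    history is a list of (user_text, assistant_text) pairs, oldest first.
    """
    parts = [f"<|im_start|>system\n{system}<|im_end|>"]
    for user_text, assistant_text in history:
        parts.append(f"<|im_start|>user\n{user_text}<|im_end|>")
        parts.append(f"<|im_start|>assistant\n{assistant_text}<|im_end|>")
    parts.append(f"<|im_start|>user\n{user_message}<|im_end|>")
    # Leave the assistant turn open so the model writes the reply.
    parts.append("<|im_start|>assistant\n")
    return "\n".join(parts)


if __name__ == "__main__":
    history = [("Hi!", "Hello, how can I help?")]
    print(build_prompt("You are a helpful assistant.", history, "What is ggml?"))
```

With a loaded model `llm`, a reply could then be produced with `llm(prompt, **GENERATION_PARAMS)` and appended to `history` as a new pair before the next turn.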